Skeleton

In many computer vision applications we have to deal with huge amounts of data, so processing can be slow and can require a lot of memory.

In order to achieve faster processing and a smaller memory footprint, we sometimes use a more compact representation called a skeleton.

A skeleton must preserve the structure of the shape but all redundant pixels should be removed.

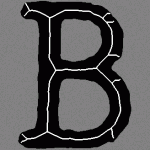

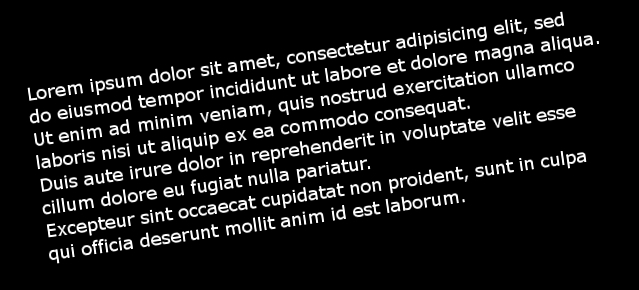

Here is a skeleton of the letter "B":

In this article we will present how to compute a morphological skeleton with the OpenCV library.

The resulting skeleton is far from perfect, but the method is really simple compared to other existing algorithms.

Pseudocode

As described on Wikipedia, a morphological skeleton can be computed using only the two basic morphological operations: dilation and erosion.

In pseudocode, the algorithm works as follows:

    img = ...;
    skel = empty;
    while (not_empty(img)) {
        skel = skel | (img & !open(img));
        img = erosion(img);
    }

At each iteration the image is eroded again, and the skeleton is refined by adding to it (set union) the pixels of the current image that are not in its opening. An opening is simply an erosion followed by a dilation.
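To make the loop concrete without any library, here is a minimal sketch on a tiny binary grid. The `Grid` type and the helpers `morph`, `notEmpty` and `skeleton` are hypothetical names introduced only for this illustration, and the sketch assumes that positions outside the image are simply skipped by both operations.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper type for this sketch: a binary image as rows of 0/1.
using Grid = std::vector<std::vector<int>>;

// Offsets of the 3x3 cross: the pixel itself and its four direct neighbours.
const int kDr[5] = {0, -1, 1, 0, 0};
const int kDc[5] = {0, 0, 0, -1, 1};

// Erosion (isErosion == true) keeps a pixel only if every pixel under the
// cross is set; dilation sets a pixel if any pixel under the cross is set.
// Positions outside the image are skipped (an assumption of this sketch).
Grid morph(const Grid& g, bool isErosion) {
    assert(!g.empty() && !g[0].empty());
    const int rows = static_cast<int>(g.size());
    const int cols = static_cast<int>(g[0].size());
    Grid out(rows, std::vector<int>(cols, 0));
    for (int r = 0; r < rows; ++r) {
        for (int c = 0; c < cols; ++c) {
            bool value = isErosion;
            for (int k = 0; k < 5; ++k) {
                const int rr = r + kDr[k];
                const int cc = c + kDc[k];
                if (rr < 0 || rr >= rows || cc < 0 || cc >= cols) continue;
                const bool set = g[rr][cc] != 0;
                value = isErosion ? (value && set) : (value || set);
            }
            out[r][c] = value ? 1 : 0;
        }
    }
    return out;
}

// True if at least one pixel is still set.
bool notEmpty(const Grid& g) {
    for (const auto& row : g)
        for (int v : row)
            if (v != 0) return true;
    return false;
}

// skel = skel | (img & !open(img)); img = erosion(img); until img is empty.
// Caveat: an image without any background pixel would never become empty.
Grid skeleton(Grid img) {
    assert(!img.empty() && !img[0].empty());
    Grid skel(img.size(), std::vector<int>(img[0].size(), 0));
    while (notEmpty(img)) {
        const Grid eroded = morph(img, true);
        const Grid opened = morph(eroded, false);  // open(img)
        for (std::size_t r = 0; r < img.size(); ++r)
            for (std::size_t c = 0; c < img[r].size(); ++c)
                if (img[r][c] != 0 && opened[r][c] == 0) skel[r][c] = 1;
        img = eroded;
    }
    return skel;
}
```

For a filled 3x3 square surrounded by background, this sketch keeps the four corners and the centre pixel. The result is disconnected, which illustrates why the skeleton produced by this method is not perfect.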

Implementation

It's really straightforward. First, load the image to process in grayscale and transform it into a binary image using thresholding:

    cv::Mat img = cv::imread("O.png", 0);  // 0: load as grayscale
    cv::threshold(img, img, 127, 255, cv::THRESH_BINARY);

We now need an image to store the skeleton and also a temporary image in order to store intermediate computations in the loop. The skeleton image is filled with black at the beginning.

    cv::Mat skel(img.size(), CV_8UC1, cv::Scalar(0));
    cv::Mat temp(img.size(), CV_8UC1);

We have to declare the structuring element we will use for our morphological operations. Here we use a 3x3 cross-shaped structuring element (i.e. we use 4-connectivity).

    cv::Mat element = cv::getStructuringElement(cv::MORPH_CROSS, cv::Size(3, 3));

And now the core of the algorithm, the main loop. We need a boolean variable in order to check if there is at least one white pixel remaining. Operations are done in-place when possible.

    bool done;
    do {
        cv::morphologyEx(img, temp, cv::MORPH_OPEN, element);  // temp = open(img)
        cv::bitwise_not(temp, temp);                            // temp = !open(img)
        cv::bitwise_and(img, temp, temp);                       // temp = img & !open(img)
        cv::bitwise_or(skel, temp, skel);                       // skel = skel | temp
        cv::erode(img, img, element);                           // img = erosion(img)

        double max;
        cv::minMaxLoc(img, 0, &max);
        done = (max == 0);                                      // no white pixel left
    } while (!done);

The use of the minMaxLoc function deserves an explanation. We want to check whether at least one white pixel is still left in the image. I have not found a dedicated function for this task in OpenCV, so I simply check whether the maximum value is 0. minMaxLoc stores the minimum value through its second parameter (ignored if it is a NULL pointer) and the maximum through its third parameter. A short-circuit OR function would be nice for this task.
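As an illustration of what such a short-circuit check could look like, here is a minimal sketch over a raw 8-bit pixel buffer. The helper name `hasForeground` is hypothetical. Applying it to a `cv::Mat` would assume a continuous, single-channel 8-bit image (so that `img.data` and `img.total()` describe the whole buffer), which is an assumption of this sketch rather than something the code above guarantees.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>

// Hypothetical helper: returns true as soon as one non-zero pixel is found,
// without scanning the rest of the buffer (std::any_of stops at the first hit).
bool hasForeground(const std::uint8_t* data, std::size_t count) {
    assert(data != nullptr || count == 0);
    return std::any_of(data, data + count,
                       [](std::uint8_t v) { return v != 0; });
}
```

Unlike a maximum computation, this scan can stop early, which pays off during the first iterations when the image still contains many white pixels.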

The loop is over, we have our skeleton, let's display it!

    cv::imshow("Skeleton", skel);
    cv::waitKey(0);

Results

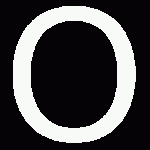

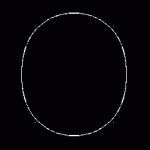

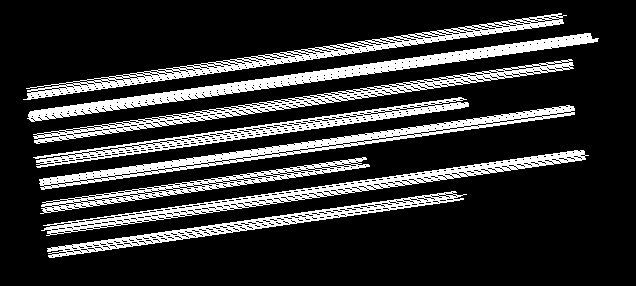

On a big "O":

On the word "OpenCV":

Optimization

As discussed with Arthur Kalverboer in the comments below, it is possible to optimize the computation in several ways.

First of all, notice that we perform the opening and then, right after, an erosion on the same image. Since an opening is just an erosion followed by a dilation, we can perform the erosion once, save it to a new image eroded, dilate it to obtain the opening, and copy eroded to img at the end of the loop.

The second optimization concerns the use of cv::minMaxLoc to check whether an image still has white pixels: computing the norm (cv::norm) of the image is faster.

EDIT2: Abid Rahman told me the function 'cv::countNonZero' is even faster, I didn't know this function existed, thanks!

Finally, the last optimization is to replace the "and" and "not" operations by a single set difference operation (cv::subtract). This works because we only manipulate binary images.

Here is the updated code:

    cv::threshold(img, img, 127, 255, cv::THRESH_BINARY);
    cv::Mat skel(img.size(), CV_8UC1, cv::Scalar(0));
    cv::Mat temp;
    cv::Mat eroded;

    cv::Mat element = cv::getStructuringElement(cv::MORPH_CROSS, cv::Size(3, 3));

    bool done;
    do {
        cv::erode(img, eroded, element);
        cv::dilate(eroded, temp, element); // temp = open(img)
        cv::subtract(img, temp, temp);
        cv::bitwise_or(skel, temp, skel);
        eroded.copyTo(img);

        done = (cv::countNonZero(img) == 0);
    } while (!done);
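The replacement of the "and"/"not" pair by a subtraction relies on the pixels being either 0 or 255. The following sketch checks this on single pixel values. The helpers `saturatingSubtract`, `andNot`, `agree` and `checkBinaryEquivalence` are hypothetical names, and the subtraction is written to saturate at 0, which is how cv::subtract behaves on 8-bit data.

```cpp
#include <cassert>
#include <cstdint>

// 8-bit subtraction that clamps negative results to 0.
std::uint8_t saturatingSubtract(std::uint8_t a, std::uint8_t b) {
    return a > b ? static_cast<std::uint8_t>(a - b) : 0;
}

// a AND (NOT b): the bitwise_not followed by bitwise_and of the first version.
std::uint8_t andNot(std::uint8_t a, std::uint8_t b) {
    return static_cast<std::uint8_t>(a & ~b);
}

// True if both formulations give the same pixel value.
bool agree(std::uint8_t a, std::uint8_t b) {
    return saturatingSubtract(a, b) == andNot(a, b);
}

// Checks the four combinations that binary (0 or 255) images can produce.
void checkBinaryEquivalence() {
    const std::uint8_t values[2] = {0, 255};
    for (std::uint8_t a : values)
        for (std::uint8_t b : values)
            assert(agree(a, b));
}
```

On non-binary values the two formulations differ: for a = 200 and b = 100 the subtraction gives 100 while the "and not" gives 136, so the thresholding step must not be skipped.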

Also, don't forget to crop your images before processing. The two images I gave as examples are not cropped. Cropping them (manually or using OpenCV) also improves execution time.
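As a rough idea of what the cropping step computes, here is a minimal library-free sketch that finds the bounding box of the white pixels in a row-major 8-bit buffer. The `Box` struct and the `foregroundBox` helper are hypothetical names for this illustration. The `margin` parameter is an assumption of this sketch: keeping a thin band of background around the shape seems safer when the erosion ignores positions outside the image, because a shape touching the image border would then not be eroded from that side.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical result type: top-left corner and size of the crop region.
struct Box {
    int x, y, width, height;
};

// Bounding box of all non-zero pixels, enlarged by `margin` pixels on each
// side and clamped to the image. Returns an empty box for an all-black image.
Box foregroundBox(const std::vector<std::uint8_t>& pixels,
                  int rows, int cols, int margin) {
    assert(rows >= 0 && cols >= 0 && margin >= 0);
    assert(pixels.size() == static_cast<std::size_t>(rows) * cols);
    int minX = cols, minY = rows, maxX = -1, maxY = -1;
    for (int y = 0; y < rows; ++y) {
        for (int x = 0; x < cols; ++x) {
            if (pixels[static_cast<std::size_t>(y) * cols + x] == 0) continue;
            minX = std::min(minX, x);
            maxX = std::max(maxX, x);
            minY = std::min(minY, y);
            maxY = std::max(maxY, y);
        }
    }
    if (maxX < 0) return Box{0, 0, 0, 0};  // no white pixel at all
    const int left = std::max(0, minX - margin);
    const int top = std::max(0, minY - margin);
    const int right = std::min(cols - 1, maxX + margin);
    const int bottom = std::min(rows - 1, maxY + margin);
    return Box{left, top, right - left + 1, bottom - top + 1};
}
```

With OpenCV itself, a comparable box can probably be obtained by passing the non-zero pixel coordinates to cv::boundingRect; the sketch above only shows the underlying computation.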